UX case studies that changed the world and inspire design decisions

Critical lessons from design failures, ethical breakdowns, and system mistakes

Some of the most important UX lessons do not come from polished success stories, but from failures with serious human, financial, and ethical consequences. These case studies show how interface design, transparency, feedback, error handling, and trust can shape real-world outcomes far beyond the screen.

I study these examples as part of my wider design thinking practice. They help me reflect on how systems behave under pressure, how users respond in moments of uncertainty, and why responsible design matters in both critical services and everyday consumer experiences.

The challenge

The challenge in learning from these cases is to look beyond the surface and understand the deeper design patterns behind each failure. In many of these examples, the issue was not simply a technical bug or an isolated business decision, but a breakdown in visibility, trust, safety, accountability, or ethical intent.

Studying these events through a UX lens helps reveal how poor feedback, misleading interfaces, hidden system logic, dark patterns, or weak fail-safes can lead to confusion, harm, and loss of trust. It also reinforces the responsibility designers have when shaping systems that influence human decisions.

My role

This page reflects my ongoing analysis of influential UX case studies and my interest in understanding how design decisions affect people in real contexts. I use these examples as thought pieces to inform my own approach to product design, service design, accessibility, ethics, and decision-making under pressure.

Rather than treating UX as purely visual, I see it as a discipline that shapes safety, trust, clarity, and behaviour. These case studies strengthen my ability to question assumptions, identify risk, and make more responsible design choices in both complex and everyday systems.

Process and approach

My approach is to review well-known examples of system failure or manipulation through a UX lens, identifying what went wrong, how users were affected, and what practical design lessons can be carried forward. Each case helps build a stronger understanding of interface clarity, user feedback, transparency, hierarchy, ethics, and accountability.

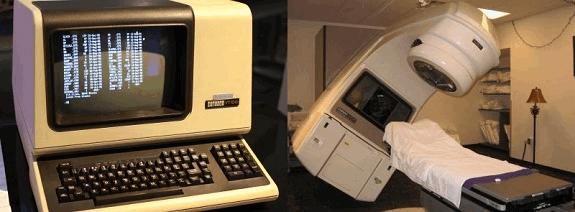

Therac-25

Therac-25 was a radiation therapy machine used in hospitals in the 1980s. A serious interface and safety design failure meant that operators who edited treatment settings quickly could trigger a software fault. Between 1985 and 1987, this led to massive radiation overdoses in at least six patients, several of whom died and others of whom were seriously injured.

Source image: Wikipedia. Software compiled from available source code.

The UX/UI mistake

- The interface was confusing and misleading, allowing inputs to be entered faster than the system could safely register them.

- Error messages were unclear, and the system appeared to be functioning normally even when it was not.

- Important physical safety interlocks from earlier models had been removed in favour of software controls.

Lesson learned

- Never assume users will interact perfectly with a system.

- Clear feedback and fail-safes are essential in critical systems.

- Efficiency must never come at the expense of safety.

The Post Office Horizon scandal (UK)

The Horizon IT system used by the UK Post Office created false accounting shortfalls that led to hundreds of postmasters being wrongly accused of theft and fraud. The consequences included prison sentences, bankruptcies, reputational damage, and profound personal harm.

Source image: By Muhammad Karns, Judicial Office Twitter feed, CC BY-SA 4.0

The UX/UI mistake

- The system surfaced financial discrepancies without giving users a meaningful way to verify or challenge them.

- Known system errors were not communicated transparently to the people affected.

- Workflows and data structures made it extremely difficult for users to trace or correct issues.

Lesson learned

- Accountability in UX is critical.

- Bad systems can destroy trust, livelihoods, and lives.

- Transparency must be designed into high-stakes services.

Boeing 737 MAX (2018–2019)

Boeing introduced MCAS, a flight control system on the 737 MAX, without making pilots fully aware of how it worked. A single faulty angle-of-attack sensor could trigger repeated nose-down inputs. This contributed to two fatal crashes, Lion Air Flight 610 in 2018 and Ethiopian Airlines Flight 302 in 2019, which together killed 346 people.

Source image: By PK-REN, Flickr, CC BY-SA 2.0

The UX/UI mistake

- Pilots were not given adequate visibility into the presence and behaviour of the system.

- Alerts and recovery pathways were not sufficiently clear under pressure.

- Cost-saving decisions reduced training and transparency.

Lesson learned

- Training and transparency are part of the user experience.

- Critical systems must support recognition, not guesswork.

Three Mile Island nuclear disaster (1979)

A partial nuclear meltdown occurred at Three Mile Island after operators were overwhelmed by alarms and misled by interface indicators. Instead of helping people understand the situation, the system increased confusion during a moment of extreme pressure.

Source image: United States Department of Energy, Public Domain

The UX/UI mistake

- Operators were bombarded with too many alarms without a clear hierarchy of urgency.

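- A misleading indicator suggested a relief valve was closed when it was actually stuck open, because the light showed only that a "close" signal had been sent, not the valve's real position.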

- The system did not support fast, accurate decision-making in a high-stress environment.

Lesson learned

- Clear, actionable prioritisation saves lives.

- Interfaces must reduce cognitive overload, not add to it.

Volkswagen emissions scandal (2015)

Volkswagen used software to detect when vehicles were being tested for emissions and alter their behaviour accordingly. The result was a system that created a false appearance of environmental compliance while hiding the real impact.

Source image: Mariordo, CC BY-SA 3.0

The UX/UI mistake

- The system presented misleading outputs that suggested the cars were cleaner than they really were.

- The deceptive behaviour was hidden from both regulators and drivers.

Lesson learned

- Ethical UX matters.

- Design can be used to obscure truth as well as reveal it.

McDonald’s touchscreens and hidden fees

Self-service kiosks were designed to increase order value, but some interactions made it difficult for users to remove extras or notice added charges clearly. This is often discussed as an example of dark patterns in mainstream digital experiences.

Source image: Dirk Tussing, Flickr, CC BY-SA 2.0

The UX/UI mistake

- The interface encouraged extra spending by making removal options less visible.

- Price changes and added costs were not always surfaced with enough clarity.

Lesson learned

- Ethical UX should not manipulate users into unintended choices.

- Transparency is just as important in everyday services as in critical systems.

My contribution

My contribution here is analytical rather than delivery-based. By studying these cases, I strengthen my own design judgement and deepen my understanding of trust, ethics, safety, error handling, system feedback, and user vulnerability.

These lessons influence how I think about product decisions, service design, accessibility, and interface behaviour. They remind me that UX is never just about aesthetics or convenience. At its best, it helps people act with confidence and clarity. At its worst, it can mislead, exclude, or cause real harm.